Left: An experimental crop yield estimator travels down an orchard row at a speed of about half a mile per hour. Middle: The raw image taken by the crop yield estimator’s cameras. Right: The yellow circles represent the locations of apples, as calculated by the software.

PHOTOS COURTESY CARNEGIE MELLON UNIVERSITY

Robotics experts at Carnegie Mellon University in Pennsylvania are confident they can develop a machine able to count apples in orchards in order to provide growers with accurate crop estimates and yield maps. And it may be feasible for the machine to estimate the size of the apples.

Dr. Stephen Nuske, project scientist at Carnegie Mellon’s Field Robotics Center, said a precise estimate of the crop could help growers and packers better plan for harvest and know exactly how many bins they will need in the various parts of the orchard and how much transport and storage will be required.

“Knowing those yields can really optimize the efficiency of their operations,” he said. “The other big thing we think it can be useful for is reducing the variability of the orchards. By knowing where the fruit is, you can take actions in the orchard to increase your yields in underperforming areas.”

It might be possible, for example, to change nutrient, irrigation, or pruning strategies to increase production, he suggested.

The California company Vision Robotics began developing an automated apple crop estimation system six years ago with funding from the Washington Tree Fruit Research Commission. It was conceived as the first step in developing a robotic apple harvesting system. The company was later a cooperator in the federally funded national Comprehensive Automation for Specialty Crops (CASC) project. Now, Nuske and his colleagues, Dr. Sanjiv Singh and Dr. Marcel Bergerman, who are involved in the CASC project, have proposed an alternative crop estimation system. They have already worked on robotic crop estimation in strawberries, grapes, and other crops, and are confident that a commercial system can be developed.

Cameras

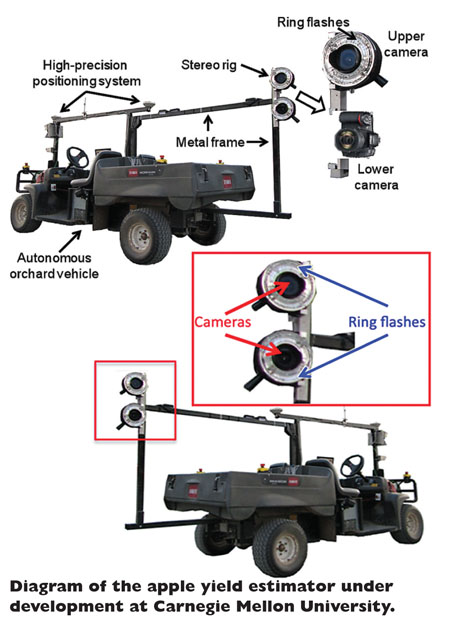

“We’ve done a lot of groundwork in having a hardware and software system capable of taking images of the trees and vines and detecting the fruit and counting it,” Nuske said. The hardware for CMU’s crop estimation system consists of two high-resolution cameras with wide-angle lenses and two ring flashes on an aluminum mount. Nuske and Singh mounted the system on an autonomous orchard platform that they developed, which can travel down the rows at a consistently slow speed (about half a mile per hour). However, the system could also be mounted on a manually driven vehicle.

As the system goes down the row, it photographs the trees, taking one image per second, which means it captures multiple images of each fruit as it goes by. A high-precision GPS device is used to determine the exact location of each apple and ensure an apple seen from both sides of the tree is not counted twice.
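One way to picture the double-counting problem is as a merge of nearby detections. The sketch below is only an illustration, not CMU's method: it assumes every detection has already been converted to a 3-D position in meters in a shared coordinate frame, and the function name `count_unique_apples` and the 4 cm merge radius are hypothetical.

```python
from math import dist


def count_unique_apples(detections, merge_radius_m=0.04):
    """Count apples, treating detections closer than merge_radius_m as one fruit.

    detections: iterable of (x, y, z) positions in meters (assumed input format).
    merge_radius_m: hypothetical tolerance, roughly half an apple's width.
    """
    unique_positions = []
    for position in detections:
        # Keep the detection only if no already-accepted apple is within the radius.
        if all(dist(position, seen) > merge_radius_m for seen in unique_positions):
            unique_positions.append(position)
    return len(unique_positions)


# The first two detections are 1 cm apart, so they are merged into one apple.
sightings = [(0.0, 0.0, 1.0), (0.01, 0.0, 1.0), (0.5, 0.0, 1.2)]
print(count_unique_apples(sightings))
```

A greedy merge like this is order-dependent and slows down on large blocks; it is meant only to show why accurate positions make repeat sightings recognizable.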

Nuske said it would be easy to calculate the size of the fruit. “All you need to know is the distance of the apple from the vehicle and the size of the apple in the image.”
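The relationship Nuske describes can be sketched with the standard pinhole camera model, in which real size equals image size times distance divided by focal length. This is a simplified illustration rather than the project's code: it assumes lens distortion has already been corrected (the system uses wide-angle lenses), and the function name and the sample numbers are hypothetical.

```python
def apple_diameter_m(diameter_px, distance_m, focal_length_px):
    """Estimate real apple diameter from its apparent size in the image.

    Pinhole model: real_size = image_size * distance / focal_length,
    with image size and focal length both expressed in pixels.
    """
    return diameter_px * distance_m / focal_length_px


# Hypothetical values: an apple 100 pixels wide, 1.5 m from the camera,
# seen through a lens with a 2000-pixel focal length.
print(apple_diameter_m(100, 1.5, 2000))  # about 0.075 m, i.e. 7.5 cm
```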

The speed at which the system travels down the row could be increased if an accurate count proves possible with fewer images or if the cameras could capture images faster. There’s no reason, Nuske said, why it couldn’t ultimately operate ten times as fast.
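The trade-off between travel speed and image rate is simple arithmetic, sketched below. The speed and image rate come from the figures quoted above; the function name is hypothetical.

```python
METERS_PER_MILE = 1609.344
SECONDS_PER_HOUR = 3600


def image_spacing_m(speed_mph, images_per_second):
    """Distance the vehicle travels along the row between consecutive images."""
    speed_m_per_s = speed_mph * METERS_PER_MILE / SECONDS_PER_HOUR
    return speed_m_per_s / images_per_second


# Current setup: half a mile per hour, one image per second.
print(round(image_spacing_m(0.5, 1), 3))   # roughly 0.224 m between images

# Ten times the speed needs ten images per second to keep the same spacing.
print(round(image_spacing_m(5.0, 10), 3))  # roughly 0.224 m between images
```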

Night

When the scientists began their crop estimation work on strawberries, they operated the system during the day, but found that it performed more consistently with controlled lighting at night. The images are in color. The software uses hue, saturation, and value to distinguish red apples from other objects in the orchard, such as wires, trunks, branches, and foliage. A different method, which uses color intensity, is used to detect green apples. Apples have a stronger green color than foliage. Light reflected from the objects in the image is used to distinguish the shape and location of the apples.
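A per-pixel test on hue, saturation, and value might look like the sketch below. It uses Python's standard `colorsys` module; the threshold values and the function name are illustrative assumptions, not the settings used in the CMU software.

```python
import colorsys


def looks_like_red_apple(r, g, b):
    """Return True if an 8-bit RGB pixel falls in a 'red apple' HSV range.

    Thresholds are illustrative guesses, not values from the CMU system.
    """
    h, s, v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
    is_red_hue = h <= 0.05 or h >= 0.95  # red wraps around both ends of the hue scale
    is_saturated = s >= 0.5              # rejects gray wires and pale bark
    is_bright_enough = v >= 0.2          # rejects deep shadow
    return is_red_hue and is_saturated and is_bright_enough


print(looks_like_red_apple(200, 30, 40))    # a saturated red pixel
print(looks_like_red_apple(60, 140, 50))    # a leaf-green pixel
print(looks_like_red_apple(128, 128, 128))  # a gray wire
```

Working in hue, saturation, and value rather than raw red, green, and blue keeps color identity separate from brightness, which fits the article's point that controlled lighting at night gives more consistent results.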

Results from orchard trials show that the robotic yield estimator is most accurate in high-density, trellised plantings with two-dimensional trees, where most of the fruit are visible. It is far less accurate in traditional plantings with widely spaced large trees where much of the fruit is hidden from view, or “occluded,” to use the scientific term.

“We can count all the visible apples,” Nuske said. “But there’s no method where you can count an apple that’s not visible.”

However, if the number of hidden apples is consistent, which was the case in the trials, the estimation system could be calibrated by having humans count all the apples on sample trees then calculating the percentage of apples the machine is missing.
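The calibration step amounts to scaling the machine count by the ratio of human to machine counts on the sample trees. The sketch below uses made-up counts purely for illustration; the function names are hypothetical.

```python
def calibration_factor(human_sample_count, machine_sample_count):
    """Ratio of apples actually present to apples the machine saw on sample trees."""
    return human_sample_count / machine_sample_count


def calibrated_estimate(machine_block_count, factor):
    """Scale the machine's count for a whole block to account for hidden fruit."""
    return machine_block_count * factor


# Hypothetical sample trees: humans count 1,000 apples, the machine sees 700.
factor = calibration_factor(1000, 700)

# Hypothetical block where the machine counts 7,000 visible apples.
print(round(calibrated_estimate(7000, factor)))  # about 10,000 apples
```

The approach works only as long as the share of hidden fruit stays roughly constant between the sample trees and the rest of the block.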

This would not necessarily have to be done each year or for each orchard, Nuske said. Similar orchards might be able to use the same calibration factor. In their grape studies, they worked in two similar-styled vineyards—one in New York, and the other in California—and were able to use the same calibration in California as in New York without needing to take manual samples again.

The scientists tested the apple yield estimation system in 2011 with Red Delicious and Granny Smith apples at a Washington State University research orchard near Wenatchee. The estimate for red apples on a high-density system was quite accurate, coming in 3.2 percent lower than an estimate made by humans counting each apple. They also tested it in a block of green apples that had not been thinned and had large clusters of fruit and more foliage. As a result, many apples could not be detected because they were hidden by other apples or leaves. In that block, the machine estimate was 30 percent lower than the human count, but, after calibration, it was within 1.2 percent of the human count.

Further tests were conducted with Honeycrisp this September at Pennsylvania State University’s Fruit Research and Extension Center in Biglerville. Data are still being analyzed.

The four-year CASC project will end this year, but Nuske will continue to work on generic crop estimation systems for another three years with USDA funding. The National Wine and Grape Initiative is supporting the grape research and is interested in seeing a crop estimator commercialized.

Nuske suggests that not every grower would purchase a yield estimation system. Rather, a service company might buy one and do yield estimation for growers on a contract or service basis.
